Kubernetes Lab, Day 1

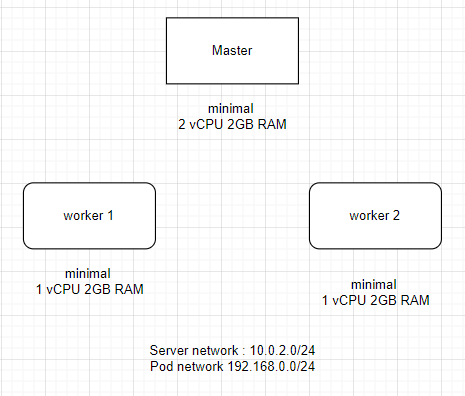

Kubernetes cluster topology

Local Ubuntu 20.04 repository

deb http://kebo.pens.ac.id/ubuntu/ focal main restricted universe multiverse

deb http://kebo.pens.ac.id/ubuntu/ focal-updates main restricted universe multiverse

deb http://kebo.pens.ac.id/ubuntu/ focal-security main restricted universe multiverse

deb http://kebo.pens.ac.id/ubuntu/ focal-backports main restricted universe multiverse

deb http://kebo.pens.ac.id/ubuntu/ focal-proposed main restricted universe multiverse

Lab exercise

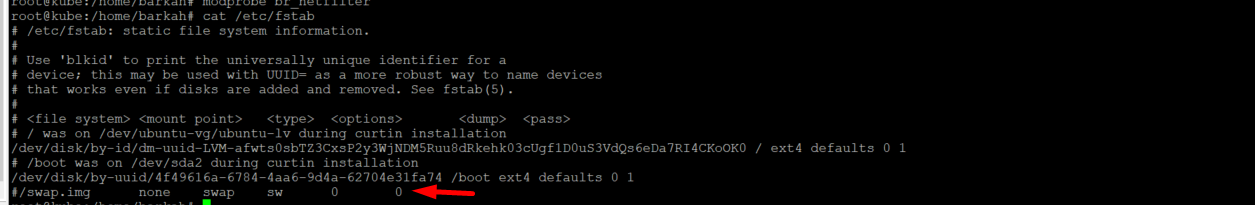

- Disable swap

Make sure swap is off for the current session (not persistent) with:

sudo swapoff -a

Then disable swap permanently:

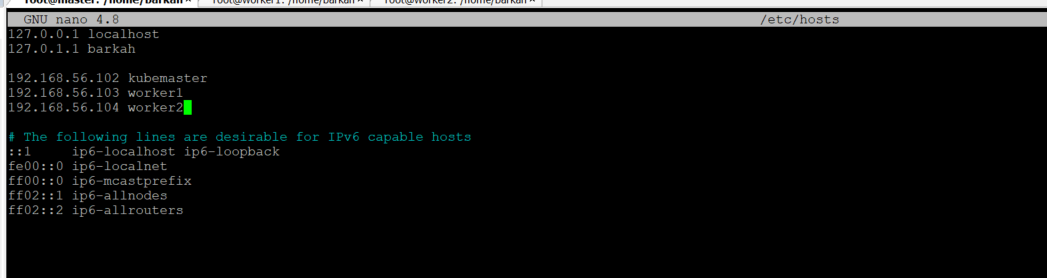

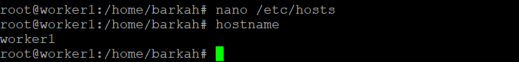
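Disabling swap permanently usually comes down to commenting out the swap entries in /etc/fstab so they are not activated at boot. The following is a minimal sketch of that edit, not necessarily what the screenshots show; the `comment_out_swap` helper and the sample fstab entries are made up for illustration.

```shell
#!/bin/sh
# Sketch: print an fstab with every active swap entry commented out.
# Reads the file given as $1 and writes the result to stdout;
# the input file itself is not modified.
comment_out_swap() {
    sed -E '/^[[:space:]]*[^#[:space:]].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

# Demo on a throwaway file (hypothetical entries, not taken from the lab VMs).
demo_fstab=$(mktemp)
printf '%s\n' \
    'UUID=1234-abcd / ext4 defaults 0 1' \
    '/swapfile none swap sw 0 0' > "$demo_fstab"
comment_out_swap "$demo_fstab"
rm -f "$demo_fstab"
```

On a real host one possible usage is `sudo cp /etc/fstab /etc/fstab.bak`, followed by `comment_out_swap /etc/fstab.bak | sudo tee /etc/fstab`. Reading from the backup avoids truncating the file while it is still being read.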

- Update /etc/hosts on every server to match the IP addresses of your cluster

cat <<EOF>> /etc/hosts

192.168.56.102 kubemaster

192.168.56.103 worker1

192.168.56.104 worker2

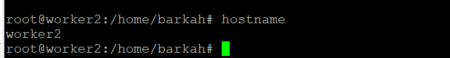

EOF- Update hostname sesuai fungsi masing-masing server
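The hostname step has no command attached. One way to keep it consistent with the /etc/hosts entries is to look the name up by IP and pass it to `hostnamectl`. This is a sketch under that assumption; `hostname_for_ip` is a hypothetical helper, and the demo uses a temporary file rather than the real /etc/hosts.

```shell
#!/bin/sh
# Sketch: look up the name mapped to an IP in a hosts-style file.
# $1 = hosts file, $2 = IP address; prints the first name on the matching line.
hostname_for_ip() {
    awk -v ip="$2" '$1 == ip { print $2; exit }' "$1"
}

# Demo against a temporary file holding the same entries as the /etc/hosts step.
demo_hosts=$(mktemp)
printf '%s\n' \
    '192.168.56.102 kubemaster' \
    '192.168.56.103 worker1' \
    '192.168.56.104 worker2' > "$demo_hosts"
hostname_for_ip "$demo_hosts" 192.168.56.103
rm -f "$demo_hosts"
```

On worker1 this could be applied with `sudo hostnamectl set-hostname "$(hostname_for_ip /etc/hosts 192.168.56.103)"`, and likewise with the matching IP on the other servers.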

- Load and enable the required kernel modules

cat > /etc/modules-load.d/containerd.conf <<EOF

overlay

br_netfilter

EOF

modprobe overlay

modprobe br_netfilter

# Set the required sysctl parameters; these persist across reboots.

cat > /etc/sysctl.d/99-kubernetes-cri.conf <<EOF

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF
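The file written above only takes effect once it is loaded, which is what the `sysctl --system` call that follows does. As a sketch of how the result could be checked afterwards, the helper below compares each `key = value` line with the live value under /proc/sys. `check_sysctl_conf` is an illustrative name, and the second argument exists only so the function can be pointed at a different directory for testing.

```shell
#!/bin/sh
# Sketch: compare "key = value" lines in a sysctl.d file with the live values.
# $1 = conf file, $2 = sysctl root (defaults to /proc/sys).
# Limitation: whitespace inside values is stripped, so only single-token
# values (like the 0/1 flags used in this lab) are handled.
check_sysctl_conf() {
    conf=$1
    root=${2:-/proc/sys}
    status=0
    while IFS='=' read -r key value; do
        key=$(printf '%s' "$key" | tr -d '[:space:]')
        value=$(printf '%s' "$value" | tr -d '[:space:]')
        # Skip blank lines and comments.
        case $key in ''|'#'*) continue ;; esac
        # net.ipv4.ip_forward -> <root>/net/ipv4/ip_forward
        path=$root/$(printf '%s' "$key" | tr . /)
        actual=$(cat "$path" 2>/dev/null) || actual=''
        if [ "$actual" = "$value" ]; then
            echo "OK $key = $value"
        else
            echo "MISMATCH $key: expected $value, got ${actual:-nothing}"
            status=1
        fi
    done < "$conf"
    return $status
}

# Example call on a configured host:
#   check_sysctl_conf /etc/sysctl.d/99-kubernetes-cri.conf
```

The function returns a non-zero status if any value differs, so it can be used as a simple gate before moving on to the kubeadm steps.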

sysctl --system

- Install kubeadm, kubelet, and kubectl

kubeadm: the command that bootstraps the cluster.

kubelet: the component that runs on every machine in your cluster and does things such as starting pods and containers.

kubectl: the command-line tool used to communicate with your cluster.

- Update the package index and install the required packages

sudo apt-get update && sudo apt-get install -y apt-transport-https curl

- Download the public signing key for the Kubernetes package repository

curl -s https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add -

- Add the Kubernetes apt repository

cat <<EOF | sudo tee /etc/apt/sources.list.d/kubernetes.list

deb https://apt.kubernetes.io/ kubernetes-xenial main

EOF

- Install the packages

sudo apt-get update

sudo apt-get install -y kubelet kubeadm kubectl
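The Kubernetes project has since deprecated and frozen the legacy apt.kubernetes.io repository configured above in favour of the community-owned pkgs.k8s.io, so on a fresh machine the repository step may no longer work as written. Below is a minimal sketch of the replacement source line. The keyring path and the names `KEYRING` and `k8s_apt_source` are assumptions chosen for illustration, and v1.28 is used only because it matches the version in this lab's output.

```shell
#!/bin/sh
# Sketch: print the apt source line for the pkgs.k8s.io repository.
# $1 = Kubernetes minor version, e.g. v1.28 (the repository is split per minor release).
# KEYRING is an assumed location for the dearmored signing key.
KEYRING="${KEYRING:-/etc/apt/keyrings/kubernetes-apt-keyring.gpg}"

k8s_apt_source() {
    printf 'deb [signed-by=%s] https://pkgs.k8s.io/core:/stable:/%s/deb/ /\n' "$KEYRING" "$1"
}

k8s_apt_source v1.28

# Possible usage on a real host (requires root and network access):
#   sudo mkdir -p /etc/apt/keyrings
#   curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.28/deb/Release.key | sudo gpg --dearmor -o "$KEYRING"
#   k8s_apt_source v1.28 | sudo tee /etc/apt/sources.list.d/kubernetes.list
```

The `apt-get update` and `apt-get install` commands in this step stay the same after the source file is replaced.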

sudo apt-mark hold kubelet kubeadm kubectl

- Install the container runtime (containerd)

Kubernetes uses a container runtime to run the containers inside a Pod.

# Install containerd

## Set up the package repository

### Install packages to allow apt to use a repository over HTTPS

apt-get update && apt-get install -y apt-transport-https ca-certificates curl software-properties-common

## Add Docker's official GPG key:

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | apt-key add -

## Set up the Docker package repository

add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable"

## Install containerd

apt-get update && apt-get install -y containerd.io

# Configure containerd

mkdir -p /etc/containerd

containerd config default | sudo tee /etc/containerd/config.toml
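The default configuration generated above typically ships with `SystemdCgroup = false`, while kubeadm (v1.22 and later) defaults the kubelet to the systemd cgroup driver. A mismatch between the two can make control-plane and add-on pods restart repeatedly. The sketch below flips that setting and also aligns the sandbox image with the pause tag that kubeadm recommends in this lab's `kubeadm init` output. `tune_containerd_config` is an illustrative helper, not part of the original procedure.

```shell
#!/bin/sh
# Sketch: adjust a containerd config.toml for a kubeadm-managed node.
# $1 = path to config.toml; edited in place, with a copy kept at "$1.bak".
# The pause:3.9 tag is the one recommended by the kubeadm warning in this lab.
tune_containerd_config() {
    sed -i.bak \
        -e 's/SystemdCgroup = false/SystemdCgroup = true/' \
        -e 's#sandbox_image = ".*"#sandbox_image = "registry.k8s.io/pause:3.9"#' \
        "$1"
}

# Example call on a real node (as root), before containerd is restarted:
#   tune_containerd_config /etc/containerd/config.toml
```

If the file has been customised, it is worth diffing it against the `.bak` copy before restarting containerd.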

# Restart containerd

systemctl restart containerd

systemctl enable containerd

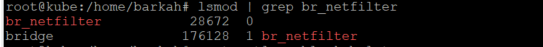

systemctl status containerd- Inisialisasi control plane

pastikan modul br_netfilter aktif menggunakan command berikut

lsmod | grep br_netfilteraktifkan layanan kubelet menggunakan command berikut

systemctl enable kubeletinisialisasi control plane

root@master:/home/barkah# kubeadm init

I0110 07:36:38.348918 12801 version.go:256] remote version is much newer: v1.29.0; falling back to: stable-1.28

[init] Using Kubernetes version: v1.28.5

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

W0110 07:38:11.519367 12801 checks.go:835] detected that the sandbox image "registry.k8s.io/pause:3.6" of the container runtime is inconsistent with that used by kubeadm. It is recommended that using "registry.k8s.io/pause:3.9" as the CRI sandbox image.

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master] and IPs [10.96.0.1 10.0.2.15]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master] and IPs [10.0.2.15 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master] and IPs [10.0.2.15 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 15.509326 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: 2zs2sf.mwbg4kfwo6fvgafq

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.0.2.15:6443 --token 2zs2sf.mwbg4kfwo6fvgafq \

--discovery-token-ca-cert-hash sha256:eaad0b4086b48fd32c2cea47f3a362adb6b7cf6f86f8323ffd14afbbf6574b00
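In the transcript above kubeadm selected 10.0.2.15 for the API server (presumably the address on the default-route interface), whereas /etc/hosts lists 192.168.56.102 for the master. If the workers can only reach the master on the 192.168.56.x network, the address can be passed explicitly. The sketch below only prints the command line. The pod CIDR value is an assumption (any range that does not overlap the node network), and `kubeadm_init_cmd` is an illustrative helper.

```shell
#!/bin/sh
# Sketch: build a kubeadm init command line with an explicit API server address.
# ADVERTISE_ADDR: the master's IP from the /etc/hosts step.
# POD_CIDR: an assumed pod network range; it should match the network plugin's pool.
ADVERTISE_ADDR="${ADVERTISE_ADDR:-192.168.56.102}"
POD_CIDR="${POD_CIDR:-10.244.0.0/16}"

kubeadm_init_cmd() {
    printf 'kubeadm init --apiserver-advertise-address=%s --pod-network-cidr=%s\n' "$1" "$2"
}

# Dry run: print the command instead of executing it.
kubeadm_init_cmd "$ADVERTISE_ADDR" "$POD_CIDR"
```

Printing first makes it easy to review the values; the resulting line can then be run as root on the control-plane node in place of the bare `kubeadm init`.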

setelah melakukan boostrap cluster baru, lakukan update konfigurasi agar serevr bisa membaca cluster yang baru dibuat

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

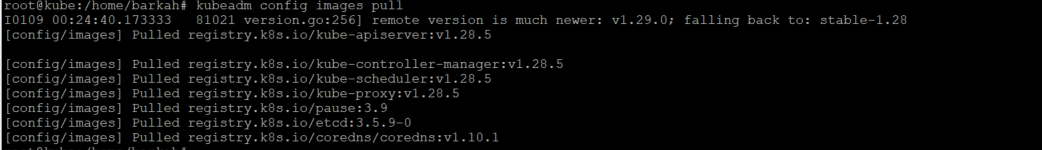

sudo chown $(id -u):$(id -g) $HOME/.kube/configdownload image menggunakan perintah kubeadm

kubeadm config images pullcek config cluster kubernates

root@master:/home/barkah# kubectl config view

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: DATA+OMITTED

server: https://10.0.2.15:6443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: kubernetes-admin

name: kubernetes-admin@kubernetes

current-context: kubernetes-admin@kubernetes

kind: Config

preferences: {}

users:

- name: kubernetes-admin

user:

client-certificate-data: DATA+OMITTED

client-key-data: DATA+OMITTED

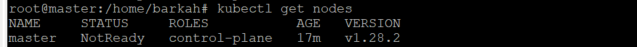

Check the status of the master node

The NotReady status means a network plugin has to be installed first.

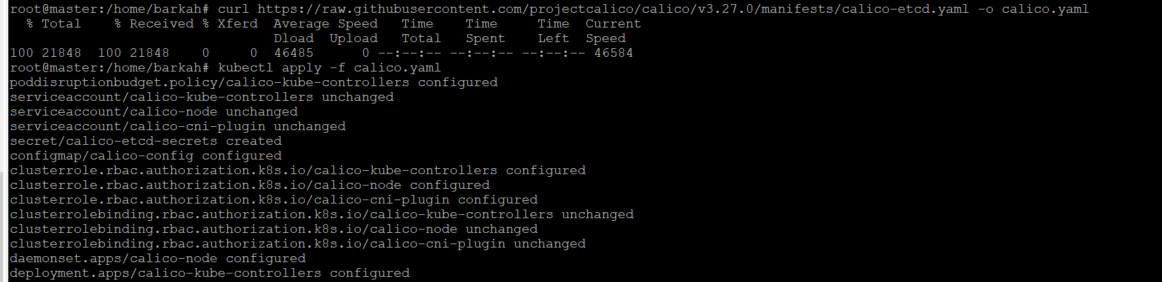

- Install the Calico network plugin

# Option 1

curl https://raw.githubusercontent.com/projectcalico/calico/v3.27.0/manifests/calico-etcd.yaml -o calico.yaml

kubectl apply -f calico.yaml

# Option 2

kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.27.0/manifests/tigera-operator.yaml

kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.27.0/manifests/custom-resources.yaml

#opsi ke 3

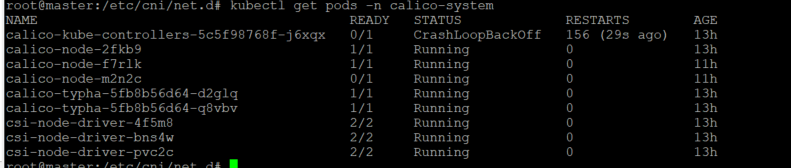

kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml Cek status calico network dengan command kubectl get pods -n calico-system

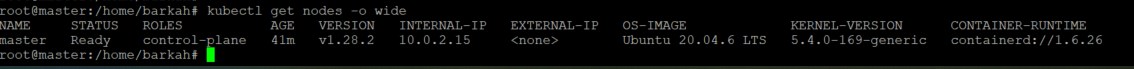

Cek status master nodes
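If Calico pods stay unready after installation, one thing worth checking is whether the pod IP pool overlaps the node network. The nodes in this lab sit on 192.168.56.x, and the default pool in Calico's custom-resources.yaml is, to my understanding, 192.168.0.0/16 (check the `cidr` field in the downloaded file). The sketch below tests two CIDRs for overlap. The /24 mask on the node network and the 10.244.0.0/16 alternative are assumptions, and both helper names are illustrative.

```shell
#!/bin/sh
# Sketch: test whether two IPv4 CIDR ranges overlap.

ip_to_int() {
    # Split a dotted quad on "." and pack the four octets into one integer.
    old_ifs=$IFS; IFS=.
    set -- $1
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

cidr_overlap() {
    # $1, $2 = CIDRs such as 192.168.0.0/16. Returns 0 (true) if they overlap.
    a_ip=${1%/*}; a_len=${1#*/}
    b_ip=${2%/*}; b_len=${2#*/}
    # Two ranges overlap exactly when they agree on the shorter of the two prefixes.
    len=$a_len
    if [ "$b_len" -lt "$len" ]; then len=$b_len; fi
    shift_by=$(( 32 - len ))
    a=$(ip_to_int "$a_ip"); b=$(ip_to_int "$b_ip")
    [ $(( a >> shift_by )) -eq $(( b >> shift_by )) ]
}

# Demo: compare candidate pod pools against the assumed node network.
for pod_cidr in 192.168.0.0/16 10.244.0.0/16; do
    if cidr_overlap "$pod_cidr" 192.168.56.0/24; then
        echo "$pod_cidr overlaps the node network 192.168.56.0/24"
    else
        echo "$pod_cidr is clear of the node network 192.168.56.0/24"
    fi
done
```

An overlap does not prove that it is the cause of any particular failure, but it is cheap to rule out before digging into pod logs.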

- Join the workers to the cluster

Join each worker to the cluster. If you have forgotten the token that was generated during kubeadm init, print a fresh join command on the master with:

root@master:/etc/cni/net.d# kubeadm token create --print-join-command

kubeadm join 192.168.56.102:6443 --token 10fsnh.l1awefmlni5n1jb0 --discovery-token-ca-cert-hash sha256:7edb42f34a5d872016f02a13481ca6e9e98d0e0bea79f9ec59257c6fc8cdbdd5

Run the join command on each worker:

root@worker1:/home/barkah# kubeadm join 192.168.56.102:6443 --token gnr4zr.9zn6ktq2zrloplbd --discovery-token-ca-cert-hash sha256:7edb42f34a5d872016f02a13481ca6e9e98d0e0bea79f9ec59257c6fc8cdbdd5

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

Install the Calico network on the worker

root@worker1:/home/barkah# kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/tigera-operator.yaml

curl https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/custom-resources.yaml -O

kubectl create -f custom-resources.yaml

The connection to the server localhost:8080 was refused - did you specify the right host or port?

root@worker1:/home/barkah#
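The `localhost:8080` error in the transcript above is what kubectl reports when it finds no kubeconfig and falls back to its built-in default server. A worker joined with `kubeadm join` does not receive an admin kubeconfig automatically. The sketch below reports which file kubectl would likely pick up; `find_kubeconfig` is an illustrative helper, and it only inspects the first entry of a colon-separated `KUBECONFIG` list.

```shell
#!/bin/sh
# Sketch: report which kubeconfig file kubectl would likely use, if any.
# Checks $KUBECONFIG (first entry only), otherwise $HOME/.kube/config.
find_kubeconfig() {
    candidate=${KUBECONFIG:-$HOME/.kube/config}
    candidate=${candidate%%:*}
    if [ -r "$candidate" ]; then
        echo "$candidate"
    else
        echo "no readable kubeconfig at $candidate" >&2
        return 1
    fi
}

find_kubeconfig || echo "kubectl would fall back to localhost:8080"
```

One way to get past the error is to copy /etc/kubernetes/admin.conf from the master into `$HOME/.kube/config` on the worker. Alternatively the kubectl commands can be run on the master, since the Calico operator schedules its pods onto every node once the manifests are applied there.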

root@worker1:/home/barkah# curl https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/custom-resources.yaml -O

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 824 100 824 0 0 1375 0 --:--:-- --:--:-- --:--:-- 1375

root@worker1:/home/barkah#

root@worker1:/home/barkah# kubectl create -f custom-resources.yaml

Check the Calico pods

root@worker1:~/.kube# kubectl get pods -n calico-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-5c5f98768f-j6xqx 0/1 CrashLoopBackOff 160 (4m24s ago) 13h

calico-node-2fkb9 1/1 Running 0 13h

calico-node-f7rlk 1/1 Running 0 11h

calico-node-m2n2c 0/1 Running 0 11h

calico-typha-5fb8b56d64-d2glq 1/1 Running 0 13h

calico-typha-5fb8b56d64-q8vbv 1/1 Running 0 13h

csi-node-driver-4f5m8 2/2 Running 0 13h

csi-node-driver-bns4w 2/2 Running 0 13h

csi-node-driver-pvc2c 2/2 Running 0 13h

Check the cluster status on the master and the workers

root@worker1:~/.kube# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane 14h v1.28.2

worker1 Ready worker 13h v1.28.2

worker2 Ready worker 13h v1.28.2

For more detail about the cluster nodes, run:

root@worker1:~/.kube# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

master Ready control-plane 14h v1.28.2 10.0.2.15 <none> Ubuntu 20.04.6 LTS 5.4.0-169-generic containerd://1.6.26

worker1 Ready worker 13h v1.28.2 192.168.56.103 <none> Ubuntu 20.04.6 LTS 5.4.0-169-generic containerd://1.6.26

worker2 Ready worker 13h v1.28.2 192.168.56.104 <none> Ubuntu 20.04.6 LTS 5.4.0-169-generic containerd://1.6.26
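When the node listing is checked repeatedly, for example while waiting for the network plugin to come up, it can help to filter it down to the nodes that still need attention. This is a minimal sketch; `not_ready_nodes` is a hypothetical helper, and the demo rows are shaped like the listing above with worker2's status altered for illustration.

```shell
#!/bin/sh
# Sketch: list nodes whose STATUS column is not exactly "Ready".
# Reads rows in `kubectl get nodes --no-headers` format on stdin.
# Note: a cordoned node ("Ready,SchedulingDisabled") is also reported.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Demo with sample rows (worker2's status is changed on purpose).
printf '%s\n' \
    'master Ready control-plane 14h v1.28.2' \
    'worker1 Ready worker 13h v1.28.2' \
    'worker2 NotReady worker 13h v1.28.2' | not_ready_nodes
```

Against a live cluster the same filter can be fed with `kubectl get nodes --no-headers | not_ready_nodes`; empty output means every node reports Ready.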

To inspect the system pods:

root@worker1:~/.kube# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-5dd5756b68-46vkp 0/1 Running 0 14h

coredns-5dd5756b68-k4cwj 0/1 Running 0 14h

etcd-master 1/1 Running 0 14h

kube-apiserver-master 1/1 Running 0 14h

kube-controller-manager-master 1/1 Running 0 14h

kube-proxy-hhtwq 1/1 Running 0 14h

kube-proxy-tgrwb 1/1 Running 0 13h

kube-proxy-x2lmd 1/1 Running 0 13h

kube-scheduler-master 1/1 Running 1 14h

References

- https://dev.to/admantium/kubernetes-with-kubeadm-cluster-installation-from-scratch-51ae

- https://computingforgeeks.com/deploy-kubernetes-cluster-on-ubuntu-with-kubeadm/#1-step-1-install-kubernetes-servers

- https://admantium.medium.com/kubernetes-with-kubeadm-cluster-installation-from-scratch-810adc1b0a64

- https://devopscube.com/setup-kubernetes-cluster-kubeadm/